Image Generated Using ChatGPT Images 2.0

Shift From Monolithic Compute To Collaborative Systems

For decades, compute scaling followed a predictable path: pack more transistors onto a single die, increase frequency, and extract higher performance from a centralized processor. That model is now structurally breaking down. The limiting factor is no longer just transistor density, but the inefficiency of mapping increasingly diverse workloads onto a uniform compute architecture.

Artificial intelligence, large-scale data processing, and real-time systems clearly expose this mismatch. Matrix-heavy operations, sparse data movement, control logic, and memory access patterns all stress different parts of a system in fundamentally different ways. Forcing these onto a single processor type leads to underutilization, power inefficiency, and memory bottlenecks. As a result, performance scaling is constrained not only by compute capability but also by how effectively the system aligns hardware with workload characteristics.

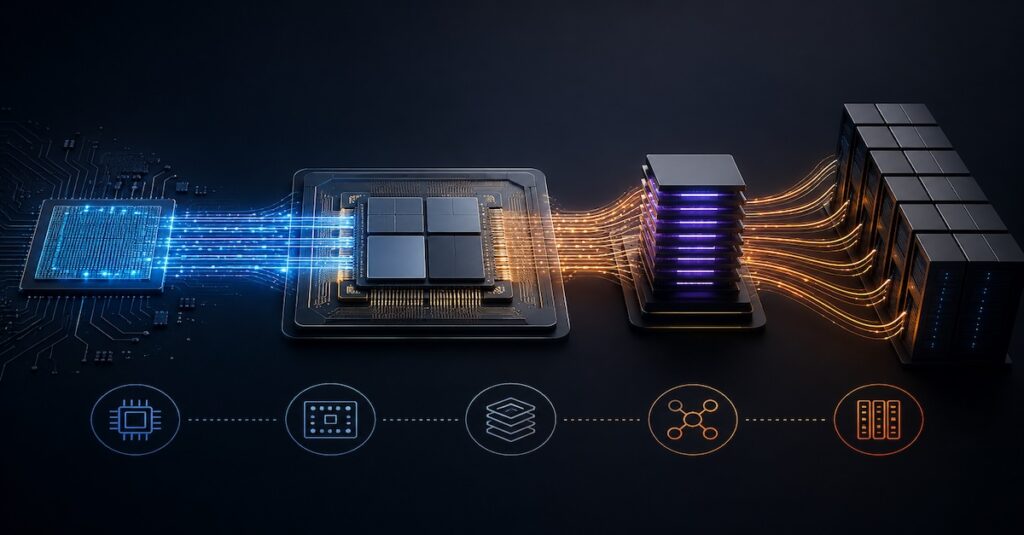

This is where co-compute systems emerge as a necessary architectural response. Instead of scaling a single engine, the system is decomposed into multiple specialized compute elements, each optimized for a specific class of operations. CPUs manage sequencing and control flow, GPUs handle throughput-oriented parallelism, AI accelerators execute dense numerical kernels, and dedicated engines offload functions such as networking, compression, or security.
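The division of labor described above can be pictured as a static dispatch table. Below is a minimal Python sketch. The operation classes, engine names, and the `dispatch` helper are hypothetical and do not correspond to any real scheduler or runtime API.

```python
from enum import Enum, auto

# Hypothetical operation classes mirroring the paragraph above,
# not the taxonomy of any real scheduler.
class OpClass(Enum):
    CONTROL = auto()      # branching and sequencing
    PARALLEL = auto()     # throughput-oriented data-parallel work
    DENSE_MATH = auto()   # matrix-heavy numerical kernels
    NETWORK = auto()      # packet processing
    COMPRESSION = auto()
    SECURITY = auto()     # encryption and authentication

# Illustrative mapping from operation class to the engine suited to it.
ENGINE_FOR = {
    OpClass.CONTROL: "cpu",
    OpClass.PARALLEL: "gpu",
    OpClass.DENSE_MATH: "ai_accelerator",
    OpClass.NETWORK: "offload_engine",
    OpClass.COMPRESSION: "offload_engine",
    OpClass.SECURITY: "offload_engine",
}

def dispatch(ops):
    """Group (name, OpClass) pairs into per-engine work queues."""
    queues = {}
    for name, op_class in ops:
        engine = ENGINE_FOR.get(op_class, "cpu")  # unmapped classes fall back to the CPU
        queues.setdefault(engine, []).append(name)
    return queues

workload = [
    ("parse_request", OpClass.CONTROL),
    ("attention_matmul", OpClass.DENSE_MATH),
    ("resize_images", OpClass.PARALLEL),
    ("tls_decrypt", OpClass.SECURITY),
    ("gzip_response", OpClass.COMPRESSION),
]
print(dispatch(workload))
```

A fixed mapping like this ignores where data lives and what it costs to move it between engines. That is the limitation the next paragraph raises.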

The critical shift is that system performance is no longer determined by the peak capability of any individual block, but by the efficiency of their interactions. Data movement, synchronization, and memory locality become first-order design constraints. In this context, the role of the semiconductor industry expands significantly. It must now enable not just faster compute units, but tightly integrated systems where heterogeneous compute elements operate as a single, coherent whole.
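A back-of-the-envelope timing model makes this concrete. The sketch assumes an offloaded step whose data transfers are not overlapped with compute. The function name and every number (compute time, payload size, link rate, synchronization cost) are hypothetical and chosen only to illustrate the effect.

```python
def step_time_us(compute_us, bytes_moved, link_gbps, sync_us):
    """End-to-end time for one offloaded step, assuming transfers do not overlap compute."""
    # link_gbps Gbit/s equals link_gbps * 1e3 bits per microsecond
    transfer_us = bytes_moved * 8 / (link_gbps * 1e3)
    return compute_us + transfer_us + sync_us

# Hypothetical step: 100 us of compute, 4 MB moved over a 100 Gbit/s link, 20 us sync.
baseline = step_time_us(100, 4_000_000, 100, 20)
faster_compute = step_time_us(10, 4_000_000, 100, 20)   # accelerator 10x faster
faster_link = step_time_us(100, 4_000_000, 200, 20)     # link bandwidth doubled
print(f"baseline {baseline:.0f} us, 10x compute {faster_compute:.0f} us, 2x link {faster_link:.0f} us")
```

With these assumed numbers, a 10x faster accelerator improves the step by only about 1.26x because the 320 µs transfer dominates. Doubling link bandwidth alone yields about 1.57x.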

Heterogeneous Integration As The Foundation

Heterogeneous integration is really a response to a problem the industry can no longer ignore. Building larger monolithic dies is becoming increasingly impractical. Yield drops quickly with size, reticle limits cap how much you can integrate, and pushing every function onto the most advanced node simply does not make economic sense.
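The yield argument can be quantified with the simple Poisson yield model, Y = exp(-A·D0), where A is die area and D0 is defect density. This is a textbook approximation, and production yield models are more elaborate. The defect density and die sizes below are hypothetical. The sketch also assumes each chiplet is tested before assembly so that only known-good dies are packaged. It ignores packaging yield and the area overhead of die-to-die interfaces.

```python
import math

def poisson_yield(area_cm2, defect_density_per_cm2):
    """Fraction of defect-free dies under the simple Poisson model Y = exp(-A * D0)."""
    return math.exp(-area_cm2 * defect_density_per_cm2)

def silicon_per_good_system(die_area_cm2, dies_per_system, d0):
    """Wafer area consumed per good system, assuming each die is tested
    individually (known-good die) and bad dies are discarded before assembly."""
    return dies_per_system * die_area_cm2 / poisson_yield(die_area_cm2, d0)

D0 = 0.1  # hypothetical defect density, defects per cm^2

mono = silicon_per_good_system(8.0, 1, D0)     # one 800 mm^2 monolithic die
chiplet = silicon_per_good_system(2.0, 4, D0)  # four 200 mm^2 chiplets
print(f"monolithic yield: {poisson_yield(8.0, D0):.2f}")
print(f"chiplet yield:    {poisson_yield(2.0, D0):.2f}")
print(f"cm^2 of silicon per good system: {mono:.1f} vs {chiplet:.1f}")
```

Under these assumptions, splitting one 800 mm² die into four 200 mm² chiplets raises per-die yield from about 45% to about 82%. It also cuts the silicon consumed per good system by roughly 45%. Real savings are smaller once assembly losses and interface overhead are counted.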

Breaking the system into chiplets is a more pragmatic approach. Different parts of the system have very different needs. High-performance computing benefits from advanced nodes, but I/O, analog, and memory interfaces often do not. Keeping those on mature nodes is not just cheaper, it is often the better engineering choice.

What makes this approach powerful is the flexibility it introduces. Instead of redesigning an entire SoC, teams can reuse and recombine chiplets depending on the application. That becomes especially important in areas like AI infrastructure, where workload requirements are still evolving and rarely uniform.

But this shift comes with its own tradeoffs. Once you split the system across multiple dies, the challenge moves to how well those dies work together. Latency, bandwidth, and power across die-to-die links start to define system performance more than the individual blocks themselves.
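Link power grows linearly with traffic: power equals bandwidth times energy per bit. The sketch below uses hypothetical energy-per-bit values for an in-package die-to-die link and an off-package link. These values are illustrative and are not figures for any particular interface standard.

```python
def link_power_watts(bandwidth_gb_per_s, energy_pj_per_bit):
    """Power spent purely on moving data across a link: P = bandwidth * energy per bit."""
    bits_per_s = bandwidth_gb_per_s * 1e9 * 8      # GB/s -> bits/s
    return bits_per_s * energy_pj_per_bit * 1e-12  # pJ -> J

# Hypothetical figures for illustration only.
traffic_gb_per_s = 2000  # 2 TB/s of cross-die traffic
for label, pj in [("in-package die-to-die link", 0.5), ("off-package serial link", 5.0)]:
    print(f"{label}: {link_power_watts(traffic_gb_per_s, pj):.1f} W")
```

At an assumed 2 TB/s of cross-die traffic, a tenfold difference in energy per bit separates 8 W from 80 W spent only on moving data. This is why die-to-die efficiency shapes the system power budget.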

This is where the industry is now focused. The problem is no longer just building better chips, but making multiple chips behave like one system. In many ways, scaling has moved up a level, from transistors to integration.

Advanced Packaging As The New System Layer

Up to this point, it is easy to think of co-compute as just an architectural shift, but in reality, it is the result of multiple layers of innovation moving together. From process technology to packaging and test, each layer is being reworked to support disaggregated systems.

What makes this interesting is that no single layer solves the problem on its own. The system only works when all of them are aligned around data movement, integration, and scalability. The table below breaks down how each technology layer helps make co-compute systems practical.

| Technology Layer | Key Innovation | Role In Co-Compute Systems | System-Level Impact |
|---|---|---|---|
| Process Technology | Advanced Nodes (5 nm and below) | Enables high-performance compute chiplets | Improves performance per watt |
| Chiplet Architecture | Die Disaggregation | Modular integration of specialized compute elements | Enhances flexibility and scalability |
| Interconnect | Die-to-Die Interfaces, High-Speed Links | Enables low-latency communication between compute units | Reduces data transfer bottlenecks |
| Packaging | 2.5D, 3D Stacking, Hybrid Bonding | Physically integrates chiplets with high bandwidth density | Shifts system integration into the package |
| Memory Integration | HBM, Near-Memory Compute | Places memory closer to compute elements | Improves bandwidth and reduces energy per bit |
| Test And Manufacturing | Advanced Test Flows, Yield Analytics | Ensures quality and scalability of complex multi-die systems | Enables reliable high-volume production |

What stands out is that the industry is no longer optimizing in isolation. Improvements in process technology, packaging, or memory only translate into system gains when they are tightly coordinated. This is a clear shift from earlier generations, where scaling at the transistor level could drive most of the value.

In co-compute systems, the bottleneck shifts across layers: sometimes it is compute, sometimes the interconnect, and often data movement. The real challenge, and the real opportunity, lies in how well these layers are co-designed to behave as a single, efficient system.
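One way to see the bottleneck move is a roofline-style model extended with one bandwidth ceiling per layer. Attainable throughput is the minimum of peak compute and, for each layer, the kernel's FLOPs per byte crossing that layer times that layer's bandwidth. Every number below is hypothetical, including peak TFLOP/s, HBM and die-to-die bandwidth, and per-kernel intensities. The kernel names are only labels.

```python
PEAK_TFLOPS = 400.0  # hypothetical peak compute, TFLOP/s
BANDWIDTH_TB_S = {
    "hbm": 3.0,         # hypothetical memory bandwidth, TB/s
    "die_to_die": 1.0,  # hypothetical cross-chiplet bandwidth, TB/s
}

def bottleneck(intensity):
    """Return (limiting layer, attainable TFLOP/s) for one kernel.

    `intensity` maps each layer to the kernel's FLOPs per byte that must
    cross that layer; FLOP/byte * TB/s gives TFLOP/s.
    """
    limits = {"compute": PEAK_TFLOPS}
    for layer, flops_per_byte in intensity.items():
        limits[layer] = flops_per_byte * BANDWIDTH_TB_S[layer]
    layer = min(limits, key=limits.get)
    return layer, limits[layer]

kernels = {
    "dense_gemm":        {"hbm": 300.0, "die_to_die": 1000.0},
    "embedding_lookup":  {"hbm": 20.0,  "die_to_die": 200.0},
    "sharded_attention": {"hbm": 200.0, "die_to_die": 50.0},
}
for name, intensity in kernels.items():
    layer, tflops = bottleneck(intensity)
    print(f"{name:18s} limited by {layer:10s} at {tflops:6.1f} TFLOP/s")
```

With these assumptions, the three kernels are limited by three different layers: compute, memory bandwidth, and the die-to-die link.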

Data Movement, System Co-Design, And The Road Ahead

At some point, adding more compute stops helping if the data cannot keep up. That is exactly where computing systems are today. Moving data between compute engines, memory, and storage is often more expensive in both power and time than the computation itself. As systems scale, this imbalance becomes more visible. Interconnect design, memory placement, and workload orchestration begin to matter as much as the compute blocks themselves.
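A simple energy ledger illustrates the imbalance. The sketch below splits a kernel's energy into arithmetic and data movement. The per-operation and per-byte costs are hypothetical round numbers. They reflect only the general observation that off-chip accesses cost far more than arithmetic and are not measurements of any process node.

```python
# Hypothetical energy costs in picojoules (illustrative only).
E_FLOP_PJ = 1.0            # one floating-point operation
E_ONCHIP_BYTE_PJ = 1.0     # one byte read from nearby on-chip SRAM
E_OFFCHIP_BYTE_PJ = 100.0  # one byte fetched from off-chip DRAM

def kernel_energy_uj(flops, onchip_bytes, offchip_bytes):
    """Return (compute, data movement) energy in microjoules."""
    compute_pj = flops * E_FLOP_PJ
    movement_pj = onchip_bytes * E_ONCHIP_BYTE_PJ + offchip_bytes * E_OFFCHIP_BYTE_PJ
    return compute_pj / 1e6, movement_pj / 1e6

# Dot product of two 1M-element fp32 vectors: 2 FLOPs and 8 bytes per element.
n = 1_000_000
compute, from_dram = kernel_energy_uj(2 * n, 0, 8 * n)
_, from_sram = kernel_energy_uj(2 * n, 8 * n, 0)
print(f"compute {compute} uJ, movement from DRAM {from_dram} uJ, from SRAM {from_sram} uJ")
```

With these assumed costs, streaming the dot product from off-chip memory spends 400 times more energy on moving data than on arithmetic. Serving the same bytes from nearby on-chip memory cuts movement energy to 8 µJ, which is the motivation for near-memory compute and careful memory placement.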

This is pushing the industry toward tighter co-design. Hardware and software can no longer be developed in isolation. System architects, chip designers, and software teams are working together earlier in the design cycle to shape how workloads are mapped onto hardware. Memory hierarchies are being redesigned to reduce unnecessary data movement, interconnect fabrics are evolving to scale with system size, and software frameworks are getting better at using heterogeneous resources efficiently.
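Mapping a workload onto hardware depends on both compute speed and where the data lives. The following greedy, per-task placement sketch picks the engine with the lowest estimated compute-plus-transfer time. The engine names, rates, memory pools, and link bandwidths are hypothetical. Real schedulers also account for contention, overlap, and queueing.

```python
# Hypothetical engines: throughput per task kind (ops per microsecond) and local memory pool.
ENGINES = {
    "cpu":   {"rate": {"control": 50.0, "matmul": 5.0},   "memory": "ddr"},
    "accel": {"rate": {"control": 1.0,  "matmul": 500.0}, "memory": "hbm"},
}
# Hypothetical bandwidth between memory pools, bytes per microsecond.
LINK_BYTES_PER_US = {("ddr", "hbm"): 50_000.0, ("hbm", "ddr"): 50_000.0}

def cost_us(task, engine):
    """Estimated time to run `task` on `engine`: compute plus moving its input data."""
    spec = ENGINES[engine]
    compute = task["ops"] / spec["rate"][task["kind"]]
    src, dst = task["data_in"], spec["memory"]
    transfer = 0.0 if src == dst else task["bytes"] / LINK_BYTES_PER_US[(src, dst)]
    return compute + transfer

def place(task):
    """Greedy placement: choose the engine with the lowest estimated cost."""
    return min(ENGINES, key=lambda engine: cost_us(task, engine))

tasks = [
    {"name": "large_matmul",    "kind": "matmul",  "ops": 1_000_000, "bytes": 1_000_000,  "data_in": "ddr"},
    {"name": "small_matmul",    "kind": "matmul",  "ops": 1_000,     "bytes": 20_000_000, "data_in": "ddr"},
    {"name": "request_routing", "kind": "control", "ops": 1_000,     "bytes": 10_000,     "data_in": "ddr"},
]
for task in tasks:
    print(task["name"], "->", place(task))
```

In this example, the small matmul stays on the CPU even though the accelerator computes it 100 times faster. Shipping 20 MB across the link costs more time than the faster computation saves.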

Looking ahead, this trend will continue to accelerate. Systems will become more disaggregated, but also more specialized. Chiplet ecosystems, standardized die-to-die interfaces, and AI-driven design flows are shaping how these systems are built. The role of semiconductor companies is expanding in the process. It is no longer just about delivering a chip, but about enabling a complete compute platform that can scale across workloads and deployments.

In this context, the definition of scaling itself is changing. The boundary between chip, package, and system is becoming less distinct. Performance gains are coming less from shrinking transistors and more from how effectively systems are integrated. Co-compute is one of the clearest indicators of this shift.