Image Generated With GPT Image 2.0

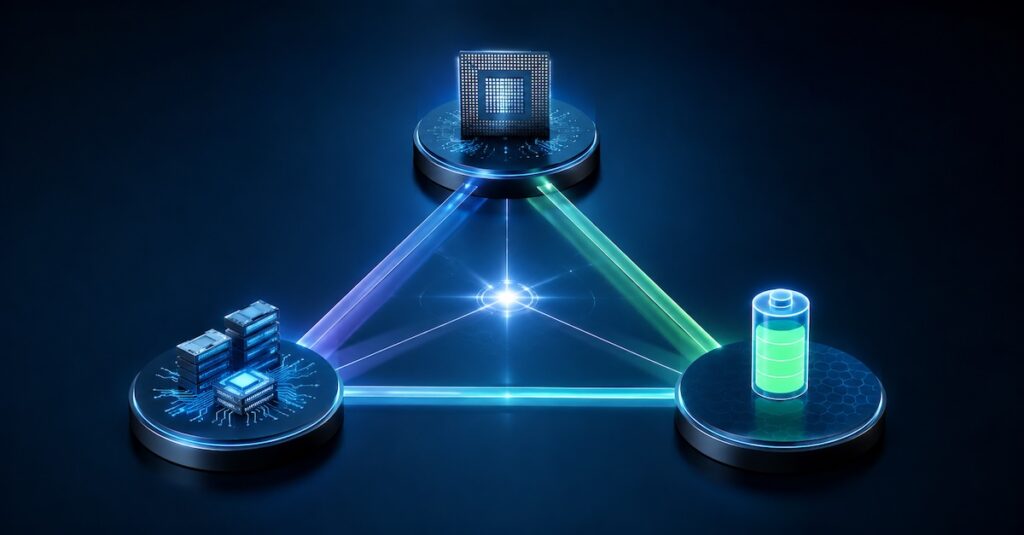

System-Level Scaling Beyond Moore’s Law

The semiconductor industry is moving beyond traditional transistor scaling. Rising fabrication costs, power density limits, and reticle constraints are making monolithic scaling increasingly difficult. Instead of relying only on smaller transistors, the industry is shifting toward system-level integration through chiplets, heterogeneous architectures, and advanced packaging.

Technologies such as 2.5D interposers, fan-out redistribution layers, and three-dimensional stacking are now central to performance scaling. These approaches improve bandwidth density, reduce latency, and enable tighter integration between compute and memory. Scaling is no longer defined only by the die, but by the efficiency of the entire package architecture.

As system-level integration becomes the foundation of semiconductor scaling, another challenge is becoming equally important: efficiently moving and processing the massive volumes of data generated by modern workloads. The industry is no longer optimizing only for transistor density or compute throughput. It is increasingly optimizing for data flow efficiency across the entire system stack.

Data-Centric Architectures Reshaping Compute

Modern workloads are increasingly limited by data movement rather than raw compute capability. Artificial intelligence, edge computing, and hyperscale systems require architectures optimized for moving, storing, and processing data efficiently.

This shift is changing how semiconductor systems are architected at both the silicon and package levels. Instead of treating memory as a separate subsystem, modern designs are bringing compute closer to memory through high-bandwidth integration, localized acceleration, and advanced interconnect architectures. The goal is to reduce latency, lower energy consumed per bit transferred, and improve overall system throughput for data-intensive workloads.

| Architecture Trend | Traditional Compute Approach | Data-Centric Compute Approach | Key Benefit |
|---|---|---|---|
| Compute And Memory Relationship | Separated compute and memory blocks | Compute placed near or within memory | Reduced latency and power |
| Data Movement | Frequent long-distance transfers | Localized data processing | Higher energy efficiency |
| Performance Bottleneck | Compute throughput limited | Memory bandwidth optimized | Faster AI and analytics workloads |
| Packaging Requirement | Standard package integration | Advanced packaging with high-density interconnects | Improved bandwidth density |
| Workload Optimization | General-purpose processing | Workload-aware acceleration | Better efficiency for AI and edge computing |
| System Design Focus | Transistor scaling | Data flow optimization | Balanced system-level performance |

This shift is also driving adoption of near-memory computing, in-memory processing, and tightly coupled memory hierarchies. Advanced packaging enables these architectures by shortening communication paths between memory and compute. In many systems, memory proximity is becoming a larger differentiator than transistor density itself.
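To make the energy-per-bit argument concrete, the following minimal sketch compares the energy spent moving the same payload over a longer path and a shorter one. The function names and the picojoule-per-bit figures are assumptions chosen purely for illustration; they are not measurements from any specific package or memory technology.

```python
def transfer_energy_joules(num_bytes: int, picojoules_per_bit: float) -> float:
    """Energy in joules to move num_bytes over a path costing picojoules_per_bit."""
    bits = num_bytes * 8
    return bits * picojoules_per_bit * 1e-12


def energy_ratio(far_pj_per_bit: float, near_pj_per_bit: float) -> float:
    """How many times more energy the longer path spends per bit moved."""
    if near_pj_per_bit <= 0:
        raise ValueError("near_pj_per_bit must be positive")
    return far_pj_per_bit / near_pj_per_bit


if __name__ == "__main__":
    payload = 1_000_000_000  # 1 GB payload, illustrative only
    # Both per-bit costs below are placeholder values, not vendor data.
    far = transfer_energy_joules(payload, picojoules_per_bit=10.0)
    near = transfer_energy_joules(payload, picojoules_per_bit=1.0)
    print(f"far path: {far:.3f} J, near path: {near:.3f} J")
```

Because total energy scales linearly with both the bits moved and the cost per bit, shortening the path pays off most on workloads that stream large volumes of data repeatedly.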

Yield Engineering As A Competitive Advantage

As packaging complexity increases, yield optimization becomes more important to profitability and scalability. Yield is no longer determined by wafer fabrication alone. It now includes assembly precision, interconnect reliability, thermal integrity, and package-level validation.

To manage this growing complexity, manufacturers are increasingly using real-time analytics, defect pattern analysis, and machine learning-driven process monitoring to improve production efficiency. In chiplet-based systems, final package yield depends on both die quality and assembly execution: a single defective die or failed bond can compromise the entire package, which makes test and manufacturing intelligence critical differentiators.
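The dependence of package yield on both die quality and assembly can be sketched with a simple multiplicative model. This assumes that die failures and assembly failures are independent and that any single failure scraps the package; real flows with rework or redundancy will deviate from it. The function name and the example yields are hypothetical.

```python
from math import prod
from typing import Sequence


def package_yield(die_yields: Sequence[float], assembly_yield: float) -> float:
    """Estimate package yield as the product of per-die yields and assembly yield.

    Assumes independent failures and no rework (a simplification).
    """
    for y in (*die_yields, assembly_yield):
        if not 0.0 <= y <= 1.0:
            raise ValueError("yields must be between 0 and 1")
    return prod(die_yields) * assembly_yield


if __name__ == "__main__":
    # Illustrative values only: four chiplets entering assembly unscreened.
    unscreened = package_yield([0.90, 0.90, 0.90, 0.90], assembly_yield=0.95)
    # Same package if screening raises the effective yield of incoming dies.
    screened = package_yield([0.99, 0.99, 0.99, 0.99], assembly_yield=0.95)
    print(f"unscreened: {unscreened:.3f}, screened: {screened:.3f}")
```

Under this model, losses compound with every die added to the package, which is why screening dies before assembly and tightening assembly execution both move the final number.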

As advanced packaging and heterogeneous integration continue to evolve, the role of semiconductor test is also expanding across the entire product lifecycle. Traditional wafer sort and final test methodologies are giving way to multi-stage validation flows that include known-good-die screening, die-to-die interconnect validation, package-level stress testing, and system-level reliability assessment. As architectures become more modular, ensuring interoperability and long-term reliability across multiple dies becomes essential for maintaining production quality.

Supporting this transition is a rapidly growing dependence on manufacturing data as a foundation for yield learning and process optimization. Inline metrology, electrical test data, thermal monitoring, and assembly analytics are increasingly connected through centralized data platforms that enable faster root-cause identification and predictive decision-making. This data-driven approach allows manufacturers to reduce yield excursions, accelerate ramp cycles, and improve overall cost efficiency across advanced packaging production flows.
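As one hedged illustration of data-driven excursion detection, the sketch below flags lots whose yield falls below a lower control limit derived from a baseline period. The mean-minus-three-sigma rule is a common statistical process control convention used here as an assumption; the function name and sample yields are hypothetical and do not represent any particular manufacturer's platform.

```python
from statistics import mean, stdev
from typing import List, Sequence


def find_yield_excursions(
    baseline: Sequence[float],
    new_lots: Sequence[float],
    n_sigma: float = 3.0,
) -> List[int]:
    """Return indices of new_lots whose yield is below the lower control limit.

    The limit is mean(baseline) - n_sigma * sample standard deviation.
    """
    if len(baseline) < 2:
        raise ValueError("baseline needs at least two lots")
    lower_limit = mean(baseline) - n_sigma * stdev(baseline)
    return [i for i, y in enumerate(new_lots) if y < lower_limit]


if __name__ == "__main__":
    # Illustrative lot yields, not real production data.
    baseline = [0.90, 0.91, 0.89, 0.90, 0.92, 0.88]
    new_lots = [0.90, 0.80, 0.89]
    print(find_yield_excursions(baseline, new_lots))
```

In practice a check like this would be one signal among several, combined with inline metrology and electrical test data before any root-cause conclusion is drawn.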