Image Generated Using Nano Banana

Energy As The New Scaling Metric

A new scaling metric is emerging in AI and semiconductors: energy per prompt. It represents the amount of electrical energy required to generate one meaningful AI response. Traditional metrics, long guided by ideas like Moore’s Law, focus on transistor density or raw performance. Energy per prompt instead shifts attention to a single unit of useful output. It reframes progress around a simple question: how much energy does it take to deliver intelligence once?

This shift is being driven by how AI is used today. Modern systems are no longer evaluated only by peak capability, but by how efficiently they operate at scale. Every query, every interaction, and every agent action generates a prompt. When these prompts scale into millions or billions per day, even small inefficiencies in energy usage become significant at the system level.

Energy per prompt makes this scaling visible. It connects what happens deep inside semiconductor devices and system architecture to real-world outcomes like cost, power consumption, and infrastructure demand. Instead of abstract performance gains, it provides a direct measure of how efficiently intelligence is delivered.

As a result, energy is no longer just a constraint to manage. It is becoming the primary metric of scaling. The next phase of progress in AI and semiconductors will not be defined only by faster or denser systems, but by how effectively they convert energy into useful computation.

What Energy Per Prompt Captures

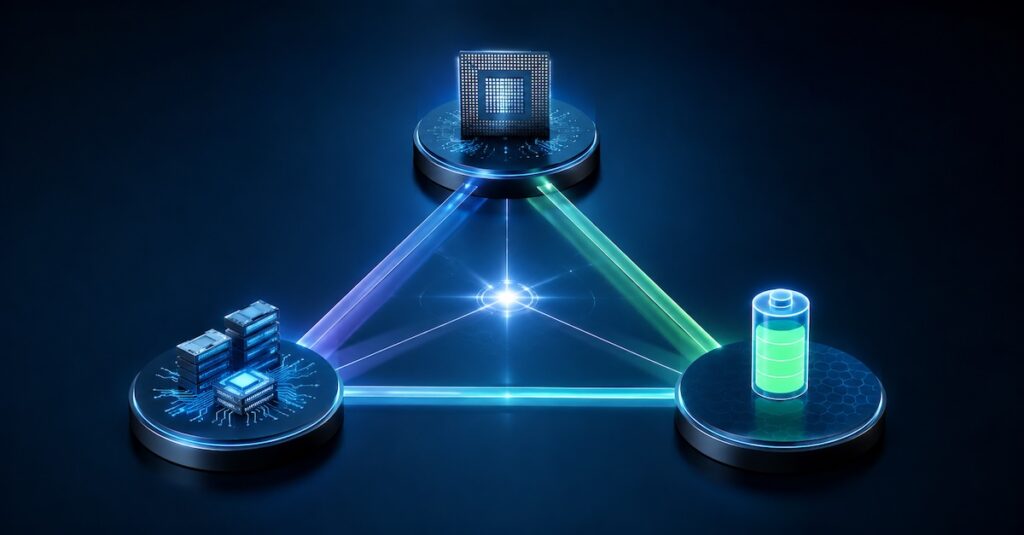

Energy per prompt is not a chip-level metric but a system-level measure. It captures the total energy, typically expressed in joules or watt-hours, consumed across the entire stack required to generate a response. That includes compute in AI accelerators and CPUs, memory access, data movement, interconnects, software execution, and even cooling and infrastructure overhead. By combining all these elements, it reflects the true energy cost of delivering intelligence.
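As a rough illustration of this accounting, the sketch below sums per-layer energy for one response and scales it by a facility overhead multiplier. The class name, the field names, the PUE-style `overhead_factor`, and every number are assumptions chosen for the example, not measurements.

```python
from dataclasses import dataclass


@dataclass
class PromptEnergy:
    """Per-prompt energy by stack layer, in joules (illustrative values only)."""
    accelerator_j: float
    cpu_j: float
    memory_j: float
    interconnect_j: float


def energy_per_prompt(it: PromptEnergy, overhead_factor: float) -> float:
    """Total joules per prompt: IT energy scaled by facility overhead.

    overhead_factor is a PUE-style multiplier (>= 1.0) standing in for
    cooling and infrastructure. It is an assumption, not a measured value.
    """
    if overhead_factor < 1.0:
        raise ValueError("overhead_factor must be >= 1.0")
    it_energy = it.accelerator_j + it.cpu_j + it.memory_j + it.interconnect_j
    return it_energy * overhead_factor


# Hypothetical breakdown for a single response.
sample = PromptEnergy(accelerator_j=600.0, cpu_j=100.0,
                      memory_j=200.0, interconnect_j=100.0)
total_j = energy_per_prompt(sample, overhead_factor=1.5)
print(f"{total_j:.0f} J per prompt ({total_j / 3600:.3f} Wh)")
```

In this made-up breakdown the accelerator accounts for less than half of the final figure once memory, interconnect, and overhead are counted, which is the sense in which the metric is system-level rather than chip-level.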

This makes it fundamentally different from traditional metrics that focus on individual components. A highly efficient chip alone does not guarantee low energy per prompt. If data movement is high or system utilization is poor, total energy can remain high. In modern AI systems, a significant portion of energy is spent moving data rather than computing, so system design becomes as important as silicon design.

As a result, energy per prompt shifts the focus from peak performance to end-to-end efficiency. It emphasizes how well the entire system works together to minimize energy usage per response. This provides a more realistic view of efficiency in large-scale AI deployments.

Why This Metric Matters Now

AI is scaling at an unprecedented rate. From user queries to autonomous agents, the number of prompts generated daily is growing rapidly. At this scale, even small inefficiencies in energy usage per prompt can translate into significant increases in total power consumption and operational cost. What once seemed negligible at low volume becomes a dominant factor at scale.
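The arithmetic behind this is simple multiplication. The sketch below converts a per-prompt energy difference into daily fleet energy; the prompt volume and the 10 J figure are hypothetical inputs picked only to show the scaling.

```python
def daily_energy_kwh(prompts_per_day: float, joules_per_prompt: float) -> float:
    """Fleet energy per day in kWh (1 kWh = 3.6e6 J)."""
    return prompts_per_day * joules_per_prompt / 3.6e6


# Hypothetical: one billion prompts per day, 10 J of avoidable energy per prompt.
extra_kwh = daily_energy_kwh(1e9, 10.0)
print(f"Extra energy: {extra_kwh:,.0f} kWh per day")
```

Under these assumed inputs, 10 J per prompt adds up to roughly 2,800 kWh every day.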

To understand this shift, it helps to compare how traditional metrics differ from energy per prompt:

| Metric | What It Measures | Limitation At Scale |
|---|---|---|
| Performance (FLOPS) | Raw compute capability | Does not reflect real energy cost per task |
| Latency | Time to generate a response | Ignores energy efficiency |
| Power (Watts) | Instantaneous rate of energy consumption | Lacks connection to useful output |
| Throughput | Number of prompts per second | Can hide inefficiencies at system level |
| Energy Per Prompt | Energy required per AI response | Directly reflects efficiency and cost at scale |

This comparison highlights why energy per prompt is becoming critical. It directly ties system behavior to real-world impact and to the energy required to produce value. As AI systems expand, optimizing for this metric enables better control over cost, infrastructure demands, and sustainability.
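One way to see how the metrics in the table relate: at steady state, energy per prompt is average power divided by throughput (watts over prompts per second gives joules per prompt). The sketch below compares two hypothetical systems; the function name and all figures are illustrative assumptions.

```python
def joules_per_prompt(avg_power_w: float, prompts_per_s: float) -> float:
    """Steady-state energy per prompt: W / (prompts/s) = J/prompt."""
    if prompts_per_s <= 0:
        raise ValueError("prompts_per_s must be positive")
    return avg_power_w / prompts_per_s


# Hypothetical systems: B serves twice the throughput of A but draws three times the power.
system_a = joules_per_prompt(avg_power_w=10_000.0, prompts_per_s=20.0)
system_b = joules_per_prompt(avg_power_w=30_000.0, prompts_per_s=40.0)
print(f"A: {system_a:.0f} J/prompt, B: {system_b:.0f} J/prompt")
```

Judged on throughput alone, system B looks better. Per prompt it uses 750 J against 500 J for system A, which is the kind of inefficiency a throughput figure can hide.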

Instead of focusing solely on speed or capacity, the industry is beginning to prioritize the efficiency with which each response is generated, making energy per prompt a central metric for scaling AI systems.

How This Changes Semiconductor And System Design

Energy per prompt changes how we design semiconductors. The goal shifts from peak performance to minimizing energy for each response. Every design decision at the chip, package, system, and software level must focus on energy efficiency.

At the silicon level, architecture choices become critical. Specialized accelerators, efficient data paths, and optimized compute units all reduce unnecessary energy consumption. Memory hierarchy plays an equally important role. In many AI workloads, moving data consumes more energy than processing it, so data locality and access patterns become key design considerations.
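A minimal model of that trade-off splits the energy of a response into a compute term and a data-movement term. The per-operation and per-byte costs below are placeholder values, assumed only to illustrate the ratio; real figures depend on the process node, the memory technology, and the workload.

```python
PJ = 1e-12  # one picojoule, in joules


def workload_energy_j(ops: float, offchip_bytes: float,
                      pj_per_op: float, pj_per_offchip_byte: float) -> tuple[float, float]:
    """Return (compute_joules, data_movement_joules) for one response."""
    compute_j = ops * pj_per_op * PJ
    movement_j = offchip_bytes * pj_per_offchip_byte * PJ
    return compute_j, movement_j


# Hypothetical costs: an off-chip byte is assumed 100x more expensive than one operation.
PJ_PER_OP = 1.0
PJ_PER_OFFCHIP_BYTE = 100.0

# Same arithmetic work, two access patterns: poor reuse vs. 10x fewer off-chip bytes.
poor_locality = workload_energy_j(1e12, 1e11, PJ_PER_OP, PJ_PER_OFFCHIP_BYTE)
good_locality = workload_energy_j(1e12, 1e10, PJ_PER_OP, PJ_PER_OFFCHIP_BYTE)

for name, (compute_j, movement_j) in [("poor", poor_locality), ("good", good_locality)]:
    print(f"{name} locality: compute {compute_j:.1f} J, movement {movement_j:.1f} J")
```

With these assumed costs the compute energy is identical in both cases, and the total is set almost entirely by how many bytes leave the chip.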

Beyond the chip, packaging and interconnect technologies also shape overall energy efficiency. Advanced packaging approaches like chiplets and high-bandwidth memory reduce the distance data needs to travel, lowering energy per operation. In parallel, software and scheduling layers determine how effectively hardware is utilized. Poor utilization can increase energy per prompt even if the hardware itself is efficient.
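The utilization effect can be sketched with a simple power model in which a device draws a fixed idle power plus a load-dependent part. The linear model, the function name, and the idle, peak, and throughput values are all assumptions for illustration.

```python
def joules_per_prompt_at(utilization: float, idle_w: float, peak_w: float,
                         max_prompts_per_s: float) -> float:
    """Energy per prompt under an assumed linear power-vs-utilization model."""
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in (0, 1]")
    power_w = idle_w + utilization * (peak_w - idle_w)
    prompts_per_s = utilization * max_prompts_per_s
    return power_w / prompts_per_s


# Hypothetical device: 300 W idle, 700 W at full load, 2 prompts/s when fully busy.
busy = joules_per_prompt_at(1.0, idle_w=300.0, peak_w=700.0, max_prompts_per_s=2.0)
quiet = joules_per_prompt_at(0.25, idle_w=300.0, peak_w=700.0, max_prompts_per_s=2.0)
print(f"100% utilized: {busy:.0f} J/prompt, 25% utilized: {quiet:.0f} J/prompt")
```

The hardware is the same in both cases. At 25% utilization the idle power is spread over fewer prompts, so each one costs 800 J instead of 350 J in this example.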

In summary, the energy-per-prompt metric demands a coordinated approach at every level. Efficiency can no longer be achieved in isolation; alignment across design, manufacturing, and system operation is essential. The shared objective is to reduce the energy required to generate each unit of intelligence.