Image Generated Using Nano Banana

Defining The Shift

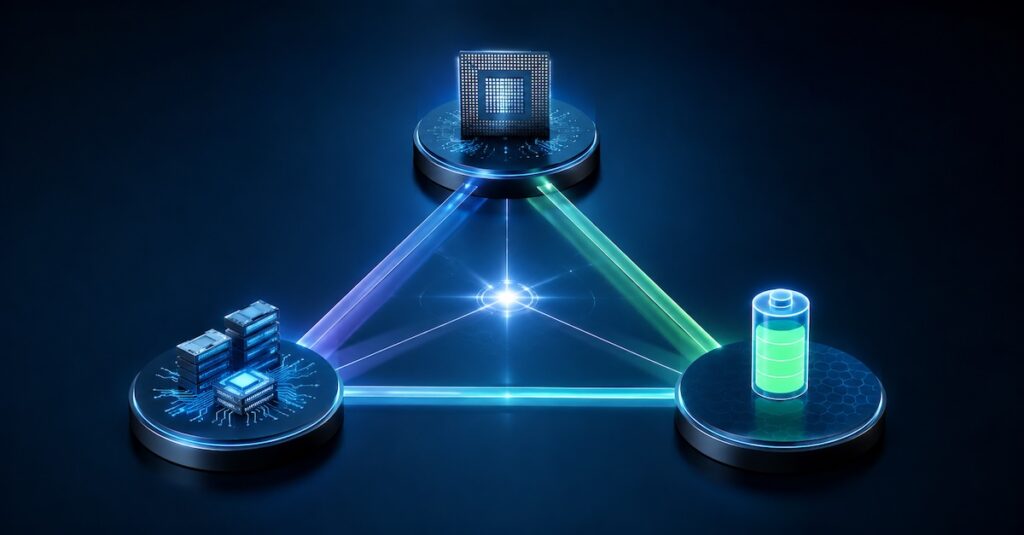

The semiconductor industry is no longer driven by a single scaling vector. As traditional transistor scaling slows, performance, efficiency, and system capability are now achieved through three distinct but interconnected approaches: Scale-Up, Scale-Out, and Scale-Across.

Together, they form a scaling trilemma in which each path offers advantages but imposes constraints.

Scale-Up focuses on maximizing capability within a single silicon boundary by increasing transistor density, integrating more functionality, and leveraging advanced nodes. This approach delivers high performance but faces growing challenges in yield, power density, and cost.

Scale-Out expands capability by distributing workloads across multiple chips or systems. It underpins modern cloud and AI infrastructure but introduces bottlenecks related to interconnect bandwidth, latency, and data movement.

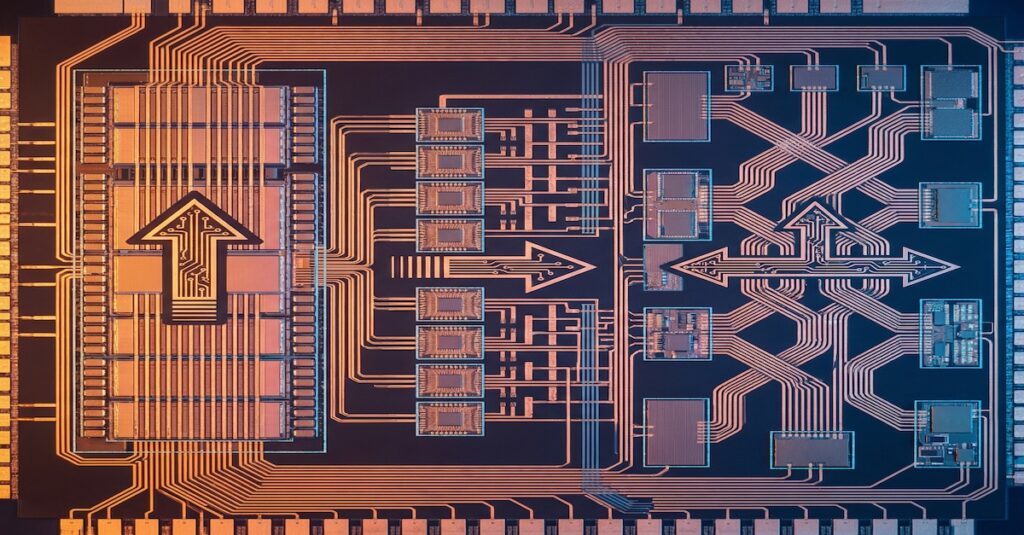

Scale-Across enables scaling through heterogeneous integration, combining multiple specialized dies and components into a unified system. This approach offers flexibility and modularity but significantly increases the complexity of integration, validation, and testing.

The result is a multidimensional scaling landscape where no single approach dominates, and success depends on balancing trade-offs across all three.

Mapping The Modes

As semiconductor systems evolve, product categories increasingly align with specific scaling strategies, chosen according to performance goals, power constraints, deployment environment, and economics. Uniform scaling has given way to diverse, product-specific pathways in which architectural decisions closely track workload and system requirements.

For example, compute-intensive workloads such as AI training and high-performance computing demand extreme performance density, often pushing designs toward Scale-Up. In contrast, cloud-native and distributed applications prioritize throughput and elasticity, making Scale-Out the dominant approach. Meanwhile, applications requiring functional diversity, modularity, or rapid product iteration, such as automotive, edge AI, and advanced SoCs, are increasingly driven by Scale-Across through heterogeneous integration.

This alignment is not accidental; instead, it reflects a deeper shift in which the scaling strategy is becoming workload-aware and system-driven rather than purely technology-node-driven.

| Scaling Mode | Typical Products | Key Benefit | Primary Bottleneck | Dominant Cost Driver |
|---|---|---|---|---|
| Scale-Up | High-performance CPUs, GPUs, AI SoCs | Maximum performance density | Yield and power limits | Die size and advanced node cost |
| Scale-Out | Data center clusters, AI training farms | Massive parallel throughput | Latency and interconnect limits | Data movement and infrastructure |
| Scale-Across | Chiplet-based systems, heterogeneous SoCs | Flexibility and modular scaling | Integration and validation | Test, packaging, and coordination |

While this table simplifies the landscape, the reality is more nuanced. Modern semiconductor products increasingly blur these boundaries. Many now combine multiple scaling approaches within a single system. For example, a high-performance AI platform may use Scale-Up within each die, Scale-Across through chiplet integration, and Scale-Out across data center clusters.

As a result, selecting a scaling strategy is no longer just about meeting performance targets. It requires optimizing across a multidimensional trade space that balances cost, data movement, integration effort, and time-to-market. Traditional design thinking falls short here. System-level orchestration becomes essential.
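One way to make this trade space concrete is a Pareto filter: an option is kept unless some other option is at least as good on every axis and strictly better on one. The sketch below is illustrative only. The option names and the scores (relative units, lower is better) are hypothetical placeholders, not measurements of any real product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DesignOption:
    """A candidate architecture scored on the four axes named in the text.

    All scores are hypothetical relative units; lower is better.
    """
    name: str
    cost: float
    data_movement: float
    integration_effort: float
    time_to_market: float

    def metrics(self):
        return (self.cost, self.data_movement,
                self.integration_effort, self.time_to_market)


def dominates(a, b):
    """True if a is no worse than b on every axis and strictly better on one."""
    pairs = list(zip(a.metrics(), b.metrics()))
    return all(x <= y for x, y in pairs) and any(x < y for x, y in pairs)


def pareto_front(options):
    """Keep only the options that no other option dominates."""
    return [o for o in options
            if not any(dominates(other, o) for other in options)]


# Hypothetical candidates, for illustration only.
options = [
    DesignOption("monolithic_scale_up", 9, 2, 3, 7),
    DesignOption("chiplet_scale_across", 6, 4, 8, 5),
    DesignOption("cluster_scale_out", 7, 9, 4, 3),
    DesignOption("oversized_hybrid", 9, 9, 8, 7),
]

front_names = [o.name for o in pareto_front(options)]
print(front_names)
```

With these placeholder scores, only the last option is filtered out, because the monolithic option matches or beats it on every axis. The other three survive because each is best on a different axis, which is the situation the trilemma describes: the filter narrows the choice, but picking among the survivors still requires a system-level judgment about which axis matters most.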

Grounding In Practice

Real-world systems rarely rely on a single scaling approach. Instead, they combine multiple strategies to achieve optimal performance and efficiency.

In advanced AI accelerators, Scale-Up is used to maximize compute density within a single die and its package, integrating large numbers of compute cores alongside co-packaged high-bandwidth memory. At the same time, Scale-Out connects thousands of such devices across data center networks to enable large-scale model training. Increasingly, Scale-Across is also introduced through chiplet-based designs that separate compute, memory, and IO into modular dies.
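Why connecting thousands of devices does not yield a thousandfold speedup can be sketched with Amdahl's law, S(N) = 1 / ((1 - p) + p / N), where p is the fraction of work that parallelizes. The extra communication term below is a deliberately crude assumption added for illustration (overhead growing linearly with device count); it is not a model taken from this article, and the values of p and the overhead coefficient are hypothetical.

```python
def amdahl_speedup(n_devices, parallel_fraction):
    """Ideal Amdahl's-law speedup on n_devices, with no communication cost."""
    serial = 1.0 - parallel_fraction
    return 1.0 / (serial + parallel_fraction / n_devices)


def speedup_with_comm(n_devices, parallel_fraction, comm_cost_per_device):
    """Amdahl speedup plus a hypothetical linear communication overhead.

    comm_cost_per_device is the fraction of single-device runtime that each
    additional device adds in synchronization and data movement. The linear
    form is an assumption; real interconnects behave differently.
    """
    serial = 1.0 - parallel_fraction
    overhead = comm_cost_per_device * (n_devices - 1)
    return 1.0 / (serial + parallel_fraction / n_devices + overhead)


if __name__ == "__main__":
    p = 0.99      # hypothetical parallel fraction
    c = 1e-5      # hypothetical per-device communication overhead
    for n in (1, 16, 256, 1024, 4096):
        ideal = amdahl_speedup(n, p)
        real = speedup_with_comm(n, p, c)
        print(f"{n:>5} devices: ideal {ideal:7.1f}x, with comm {real:7.1f}x")
```

Under these assumptions the ideal curve flattens as it approaches 1 / (1 - p), while the curve with communication overhead peaks and then declines as more devices are added. That is the interconnect and synchronization bottleneck in miniature.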

In modern high-performance computing systems, clusters of CPUs and GPUs demonstrate a strong Scale-Out model, but each node itself reflects Scale-Up optimization. Meanwhile, emerging architectures incorporate Scale-Across through advanced packaging and heterogeneous integration to balance performance and cost.
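The cost side of that balance can be illustrated with the classic Poisson yield model, Y = exp(-A * D0), where A is die area and D0 is defect density. The sketch below compares the silicon fabricated per good product for one large die versus several smaller dies. The areas and defect density are hypothetical. It also assumes each small die is tested before assembly, and it ignores packaging cost, die-to-die interface area, and assembly yield, which are the very costs Scale-Across adds.

```python
import math


def poisson_yield(die_area_cm2, defect_density_per_cm2):
    """Fraction of dies with zero defects under the Poisson yield model."""
    return math.exp(-die_area_cm2 * defect_density_per_cm2)


def silicon_per_good_unit(die_area_cm2, defect_density_per_cm2, dies_per_unit=1):
    """Silicon area (cm^2) fabricated per good product.

    Assumes dies are screened individually before assembly, so bad dies are
    discarded one at a time. Packaging and assembly losses are ignored.
    """
    good_fraction = poisson_yield(die_area_cm2, defect_density_per_cm2)
    return dies_per_unit * die_area_cm2 / good_fraction


if __name__ == "__main__":
    d0 = 0.1  # hypothetical defects per cm^2

    # One 8 cm^2 monolithic die versus four 2 cm^2 dies (same total area).
    monolithic = silicon_per_good_unit(8.0, d0)
    chiplets = silicon_per_good_unit(2.0, d0, dies_per_unit=4)

    print(f"monolithic: {monolithic:.2f} cm^2 of silicon per good unit")
    print(f"chiplets:   {chiplets:.2f} cm^2 of silicon per good unit")
```

Because yield falls exponentially with area, the large die wastes far more silicon per good unit than the four small dies do. Whether that saving survives in practice depends on the packaging, test, and integration costs left out of this sketch.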

In automotive and edge systems, Scale-Across plays a dominant role by integrating diverse functions such as compute, sensing, and connectivity into compact, modular platforms. These systems may not push extreme Scale-Up, but they rely heavily on integration efficiency and system-level optimization.

These examples illustrate that the trilemma is not about choosing one path, but about orchestrating all three in a coordinated manner.

Balancing The Future

The trilemma reflects a shift from transistor-driven progress to system-level optimization across compute density, distributed execution, and heterogeneous integration.

Each scaling vector introduces distinct constraints. Scale-Up is limited by lithography, yield, and thermal density. Scale-Out is constrained by interconnect bandwidth, synchronization, and latency. Scale-Across adds complexity to integration, validation, and testing. These constraints interact across the lifecycle, amplifying system-level challenges.

As a result, data flow and decision latency become critical factors, directly impacting yield, performance, and time-to-market. Scaling effectiveness increasingly depends on managing data movement, maintaining system visibility, and enabling closed-loop feedback.

Future systems will be defined by architectures that balance these dimensions, requiring tight integration across design, manufacturing, and system operation. Sustained progress depends on optimizing the trade-offs across Scale-Up, Scale-Out, and Scale-Across.